Ambroise Odonnat

In front of TUM in Munich

I am a second-year Ph.D. student in Paris, jointly at Huawei Noah’s Ark Lab and Inria, supervised by Romain Tavenard, Laetitia Chapel, and Ievgen Redko.

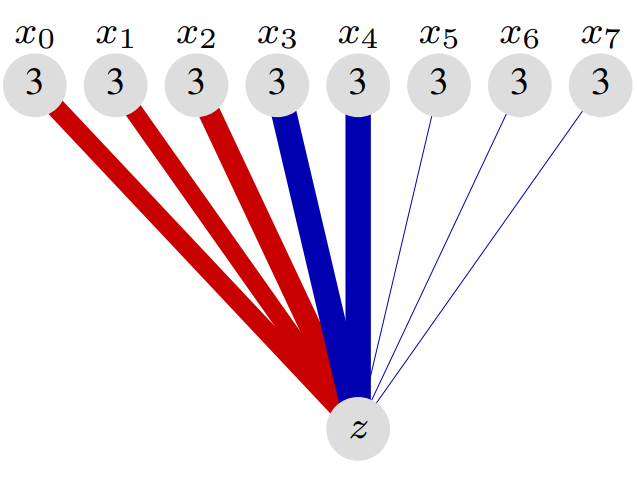

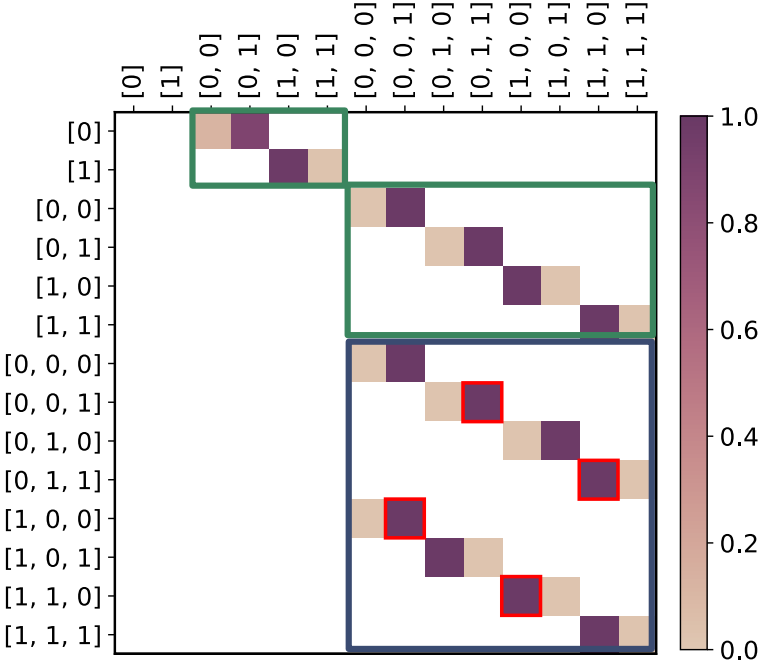

My research interests revolve around understanding representation learning and generalization in transformers through theoretical analysis and large-scale experiments on:

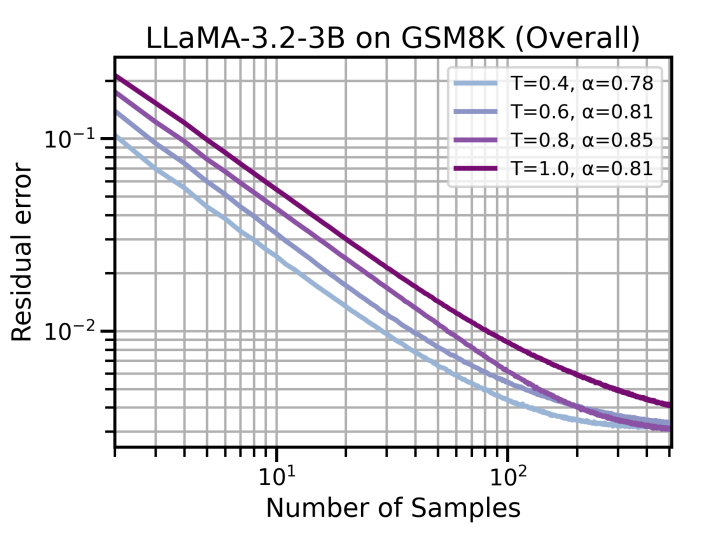

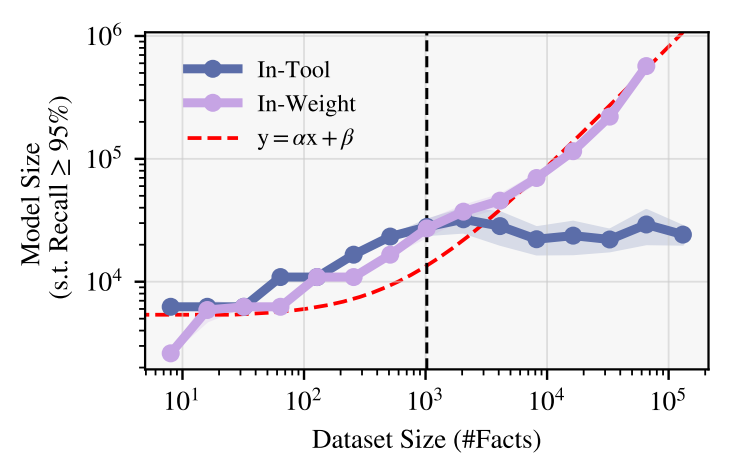

- Large language models (e.g., here and here)

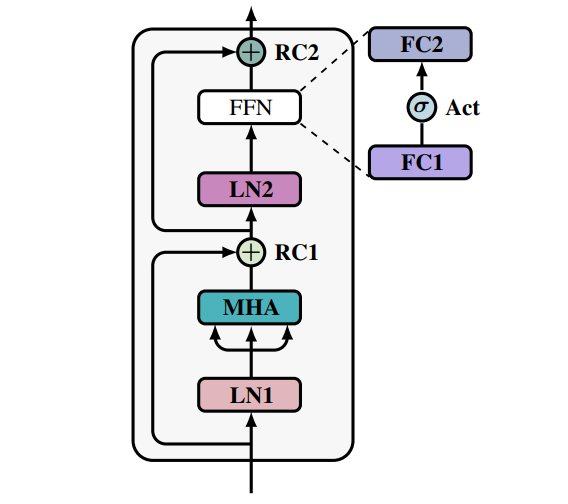

- Vision transformers (e.g., here and here)

- Time series foundation models (e.g., here)

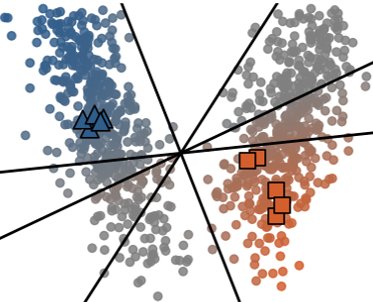

- Out-of-distribution generalization (e.g., here and here)

I was lucky to receive an ICML Oral Award, an ICASSP Oral Award, and a QBIN Best Flash Talk Award for my research in these areas. On a more amusing (and surprising 🙃) note, one of my recent articles was featured in Forbes.

I enjoy working both with a few collaborators and as part of a larger team, contributing to open-source libraries and communicating about my research. I maintain a research blog, logB, and have had the privilege of presenting my research at leading institutions such as EPFL, Mila, Imperial, Cohere, and Kyutai.

I graduated from Ecole des Ponts ParisTech in 2023 and hold a master’s degree from ENS Paris-Saclay in Mathematics, Vision, and Machine Learning (MVA).

Don’t hesitate to reach out for possible collaborations or questions regarding my research!

news

| Date | News |
|---|---|
| May 01, 2026 | 🥳 2 papers accepted at ICML (on LLM reasoning and ViT finetuning)! |
| Apr 27, 2026 | 🍍 2 papers accepted at ICLR workshops (on LLM tool use and probing ViT)! |
| Mar 27, 2026 | 🤗 Very happy to give a talk at Mila on the role of smoothness in ViT finetuning! |
| Feb 06, 2026 | 📑 New preprint on the role of smoothness in Vision Transformer finetuning. |
| Dec 07, 2025 | 🥳 Very happy to co-organize the NeurIPS BERTs workshop on TSFMs! |